Closing Down Another Attack Vector

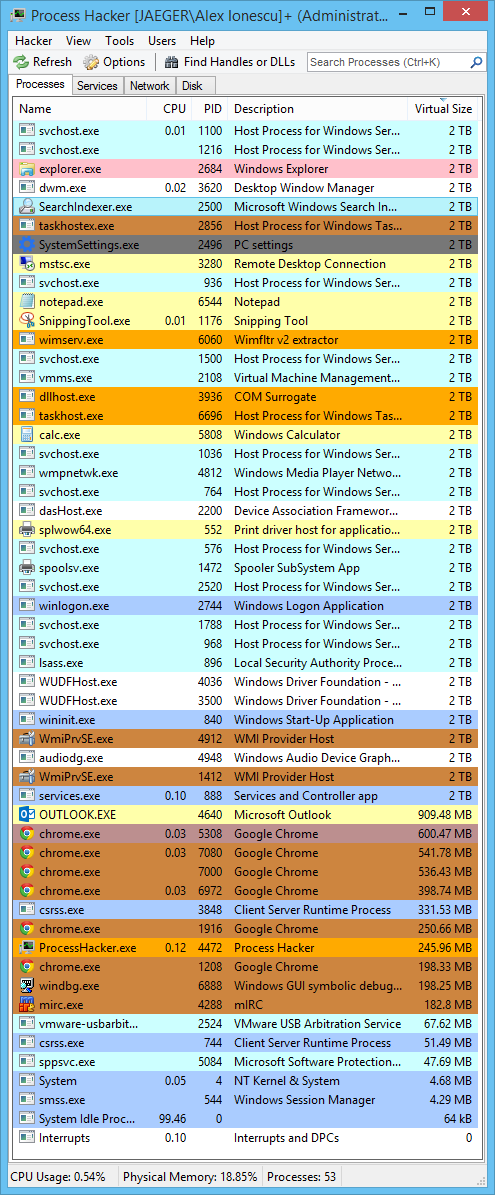

As the Windows kernel continues its quest for ever-stronger security features and exploit mitigations, the existence of fixed addresses in memory continues to undermine the advances in this area, as attackers can use data corruption vulnerabilities and combine these with stack and instruction pointer control in order to bypass SMEP, DEP, and countless other architectural defense-in-depth techniques. In some cases, entire mitigations (such as CFG) are undone due to their reliance on a single, well-known static address.

In the latest builds of Windows 10 Redstone 1, aka “Anniversary Update”, the kernel takes a much stronger stance toward Kernel Address Space Layout Randomization (KASLR), employing an arsenal of tools that can only be available to an operating system developer that also happens to own the world’s most commercially successful compiler, and the world’s most pervasive executable object image format.

The Page Table Entry Array

One of the most unique aspects of the Windows kernel is the reliance on a fixed kernel address to represent the virtual base address of an array of page table entries that describes the entire virtual address space, and the usage of a self-referencing entry which acts as a pivot describing the page directory for the space itself (and, on x64 systems, describing the page directory table itself, and the page map level 4 itself).

This elegant solution allows instant O(1) translation of any virtual address to its corresponding PTE, and with the correct shifts and base addresses, a conversion into the corresponding PDE (and PPE/PXE on x64 systems). For example, the function MmGetPhysicalAddress only needs to work as follows:

    PHYSICAL_ADDRESS
    MmGetPhysicalAddress (
        _In_ PVOID Address
        )
    {
        MMPTE TempPte;

        /* Check if the PXE/PPE/PDE is valid */
        if (
    #if (_MI_PAGING_LEVELS == 4)
            (MiAddressToPxe(Address)->u.Hard.Valid) &&
    #endif
    #if (_MI_PAGING_LEVELS >= 3)
            (MiAddressToPpe(Address)->u.Hard.Valid) &&
    #endif
            (MiAddressToPde(Address)->u.Hard.Valid))
        {
            /* Check if the PTE is valid */
            TempPte = *MiAddressToPte(Address);
            ...
    }

Each iteration of the MMU table walk uses simple MiAddressTo* macros such as the one below, which in turn rely on hard-coded static addresses.

    /* Convert an address to a corresponding PTE */
    #define MiAddressToPte(x) \
        ((PMMPTE)(((((ULONG)(x)) >> 12) << 2) + PTE_BASE))
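The macro above is the 32-bit, non-PAE form (4-byte PTEs). As a minimal sketch of the same arithmetic on x64, assuming 4 KB pages, 8-byte PTEs, and the long-standing pre-randomization PTE base of 0xFFFFF68000000000, the helpers below show how one translation, applied repeatedly, climbs the hierarchy thanks to the self-referencing entry. The names pte_address, pde_address and PTE_BASE_X64 are hypothetical and are not the kernel's own definitions.

```c
#include <assert.h>
#include <stdint.h>

/* Assumed for illustration: the fixed x64 PTE base used before randomization. */
#define PTE_BASE_X64 0xFFFFF68000000000ULL

/* Hypothetical helper: virtual address of the PTE that maps `va`.
 * Every 4 KB page owns one 8-byte PTE, so the page number (va >> 12) is
 * scaled by 8. The mask keeps the 36-bit page number (already scaled) and
 * drops the sign-extension bits of the canonical address. */
static uint64_t pte_address(uint64_t va, uint64_t pte_base)
{
    assert((pte_base & 7) == 0);   /* the array holds 8-byte entries */
    return ((va >> 9) & 0x7FFFFFFFF8ULL) + pte_base;
}

/* With a self-referencing entry, the "PTE of a PTE" is the PDE, so the
 * same translation applied twice yields the PDE address. */
static uint64_t pde_address(uint64_t va, uint64_t pte_base)
{
    return pte_address(pte_address(va, pte_base), pte_base);
}
```

Under these assumptions, feeding the PTE base into pte_address yields 0xFFFFF6FB40000000, the matching PDE base. No table is ever walked, which is exactly why a fixed base is so convenient for the kernel and for an attacker alike.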

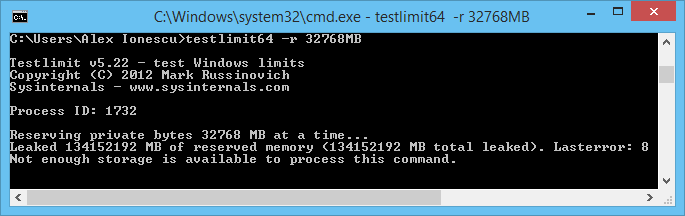

As attackers have figured out, however, this “elegance” has notable security implications. For example, if a write-what-where is mitigated by the existence of a read-only page (which, in Linux, would often imply requiring the WP bit to be disabled in CR0), a Windows attacker can simply direct the write-what-where attack toward the pre-computed PTE address in order to remove the page’s write protection (by setting the Write bit in the PTE), and then follow that by the actual write-what-where on the data.

Similarly, if an exploit is countered by SMEP, which causes an access violation when a Ring 0 Code Segment’s Instruction Pointer (CS:RIP) points to a Ring 3 PTE, the exploit can simply use a write-what-where (if one exists), or ROP (if the stack can be controlled), in order to mark the target user-mode PTE containing malicious code as a Ring 0 page.

Other PTE-based attacks are also possible, such as by using write-what-where vulnerabilities to redirect a PTE to a different physical address which is controlled by the attacker (undocumented APIs available to Administrators will leak the physical address space of the OS, and some physical addresses are also leaked in the registry or CPU registers).
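To see why a single corrupted PTE is enough to undo these protections, here is a minimal sketch of the bits involved, assuming the architectural x64 PTE layout (Valid in bit 0, Write in bit 1, User in bit 2, page frame number in bits 12 through 51). The macro and helper names are hypothetical, not Windows definitions. The code only inspects a local integer, and it simplifies by looking at the leaf entry alone, whereas the processor also consults the upper-level entries.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Architectural x64 PTE bits (names are illustrative). */
#define PTE_VALID     (1ULL << 0)
#define PTE_WRITE     (1ULL << 1)
#define PTE_USER      (1ULL << 2)
#define PTE_PFN_SHIFT 12
#define PTE_PFN_MASK  0xFFFFFFFFFFULL   /* 40 bits: PTE bits 12..51 */

/* A valid page without the Write bit is what makes a page read-only. */
static bool pte_is_read_only(uint64_t pte)
{
    return (pte & PTE_VALID) && !(pte & PTE_WRITE);
}

/* SMEP keys off the User bit: Ring 0 may not fetch code from a user page. */
static bool pte_is_user_page(uint64_t pte)
{
    return (pte & PTE_VALID) && (pte & PTE_USER);
}

/* Physical page frame number the PTE points at. */
static uint64_t pte_frame(uint64_t pte)
{
    return (pte >> PTE_PFN_SHIFT) & PTE_PFN_MASK;
}

/* Same PTE flags, different physical page. */
static uint64_t pte_with_frame(uint64_t pte, uint64_t pfn)
{
    assert(pfn <= PTE_PFN_MASK);
    return (pte & ~(PTE_PFN_MASK << PTE_PFN_SHIFT)) | (pfn << PTE_PFN_SHIFT);
}
```

Each of the three attacks described above corresponds to changing one field of one 8-byte value: the Write bit, the User bit, or the frame number. That is why knowing where the PTE lives matters so much.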

Ultimately, the list goes on and on, and many excellent papers exist on the topic. It’s clear that Microsoft needed to address this limitation of the operating system (or clever optimization, as some would call it). Unfortunately, a number of obstacles exist:

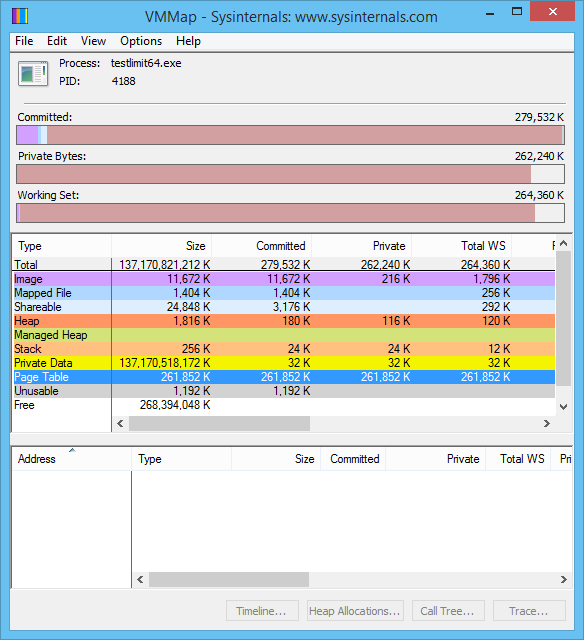

- Using virtual-mapped tables based on the EPROCESS structure (as Linux and OS X do) causes significant performance impact, as pointer chasing the different tables now causes cache misses and page translations. This becomes even worse when thinking about multi-processor systems, and the cache waste that this causes (where the TLB may end up getting filled with the various global (locked) pages corresponding to the page tables of various processes, instead of only the current process).

- Changing the address of the PTE array has a number of compatibility concerns, as PTE_BASE is actually documented in ntddk.h, part of the Windows Driver Kit. Additionally, once the new address is discovered, attackers can simply adjust their exploits to use the appropriate static address based on the version of the operating system.

- Randomizing the address of the PTE array means that Windows memory manager functions can no longer use a static constant or preprocessor definition for the address, but must instead access a global address which contains it. Forcing every processor to dereference a single global address every single time a virtual memory operation (allocation, protection, page walk, fault, etc…) is performed is a significant performance hit, especially on multi-socket, NUMA systems.

- Dealing with the global variable problem above by creating cache-aligned copies of the address in a per-processor structure causes a waste of precious kernel storage space (for example, assuming a 64-byte cache line and 640 processors, 40KB of physical memory are used to replicate the variable most efficiently). However, on NUMA systems, one would also want the page containing this data to be local to the node, so we might imagine an overhead of 4KB per socket. In practice, this wouldn’t be quite as bad, as Windows already has a per-NUMA-node-allocated, per-processor, cache-aligned list of critical kernel variables: the Kernel Processor Control Block (KPRCB).
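The 40KB figure in the last bullet can be checked with a short sketch, assuming a C11 compiler, a 64-byte cache line and 640 processors as in the text. The type per_cpu_pte_base and the helper replicated_bytes are hypothetical names used only for this arithmetic.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define CACHE_LINE_SIZE 64
#define PROCESSOR_COUNT 640

/* Hypothetical per-processor slot: one copy of the PTE base, padded out
 * to a full cache line so that no two processors share a line. */
typedef struct {
    _Alignas(CACHE_LINE_SIZE) uint64_t pte_base;
} per_cpu_pte_base;

/* The alignment requirement rounds the structure up to one cache line. */
_Static_assert(sizeof(per_cpu_pte_base) == CACHE_LINE_SIZE,
               "one slot should occupy exactly one cache line");

/* Total bytes needed to give every processor its own copy. */
static size_t replicated_bytes(size_t processors)
{
    return processors * sizeof(per_cpu_pte_base);
}
```

With these numbers, replicated_bytes(PROCESSOR_COUNT) is 640 × 64 = 40,960 bytes, or 40KB, spent to store what is logically a single 8-byte value.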

In a normal world, the final bullet would probably be the most efficient solution: sacrificing what is today a modest amount of physical memory (or re-using such a structure) for dealing with the effects of global access. Yet, locating this per-processor data would still not be cheap: most operating systems access such a structure by relying on a segment register such as FS or GS on x86 and x64 systems, or use special CPU registers such as those located on CP15 inside of ARM processors. At the very least, this causes more pointer dereferences and potentially complex microcode accesses. But if we own the compiler and the output format, can’t we think outside the box?

Dynamic Relocation Generation

When the Portable Executable (PE) file format was created, its designers realized an important issue: if compiled code made absolute references to data or functions, these hardcoded pointer values might become invalid if the operating system loaded the executable binary at a different base address than its preferred address. Originally a corner case, the advent of user-mode ASLR made this a common occurrence and new reality.

In order to deal with such rebasing operations, the PE format includes the definition of a special data directory entry called the Relocation Table Directory (IMAGE_DIRECTORY_ENTRY_BASERELOC). In turn, this directory includes a number of tables, each of which is an array of entries. Each entry ultimately describes the offset of a piece of code that is accessing an absolute virtual address, and the required adjustment that is needed to fix up the address. On a modern x64 binary, the only possible fixup is an absolute delta (increment or decrement), but more exotic architectures such as MIPS and ARM had different adjustments based on how absolute addresses were encoded on such processors.
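As a rough sketch of what a loader does with this directory on x64, the function below walks a sequence of relocation blocks and adds a delta to each 64-bit value they describe. It assumes the documented layout: a block header holding a page RVA and a block size, followed by 16-bit entries with a 4-bit type and a 12-bit offset, where type 10 is the 64-bit absolute delta and type 0 is padding. The structure and function names are hypothetical stand-ins so that the example builds without the Windows headers.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define REL_BASED_ABSOLUTE 0    /* padding entry, skipped */
#define REL_BASED_DIR64    10   /* add the delta to a 64-bit value */

/* Mirrors the layout of IMAGE_BASE_RELOCATION (header only). */
typedef struct {
    uint32_t VirtualAddress;    /* RVA of the 4 KB page the entries refer to */
    uint32_t SizeOfBlock;       /* header plus all 16-bit entries, in bytes */
} base_reloc_block;

/* Applies every DIR64 fixup found in `relocs` to `image`.
 * Returns the number of 64-bit values that were adjusted. */
static size_t apply_base_relocations(uint8_t *image, size_t image_size,
                                     const uint8_t *relocs, size_t relocs_size,
                                     int64_t delta)
{
    size_t applied = 0, pos = 0;

    assert(image != NULL && relocs != NULL);
    while (relocs_size - pos >= sizeof(base_reloc_block)) {
        base_reloc_block block;
        memcpy(&block, relocs + pos, sizeof block);
        if (block.SizeOfBlock < sizeof block ||
            block.SizeOfBlock > relocs_size - pos)
            break;                               /* malformed block */

        size_t count = (block.SizeOfBlock - sizeof block) / sizeof(uint16_t);
        for (size_t i = 0; i < count; i++) {
            uint16_t entry;
            memcpy(&entry, relocs + pos + sizeof block + i * sizeof entry,
                   sizeof entry);
            size_t rva = (size_t)block.VirtualAddress + (entry & 0x0FFF);
            if ((entry >> 12) != REL_BASED_DIR64)
                continue;                        /* padding or unsupported */
            if (rva > image_size || image_size - rva < sizeof(uint64_t))
                continue;                        /* outside the image */

            uint64_t value;
            memcpy(&value, image + rva, sizeof value);
            value += (uint64_t)delta;            /* the "absolute delta" */
            memcpy(image + rva, &value, sizeof value);
            applied++;
        }
        pos += block.SizeOfBlock;
    }
    return applied;
}
```

The delta is simply the actual load address minus the preferred one, so an image loaded at its preferred base needs no fixups at all.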

These relocations work great to adjust hardcoded virtual addresses that correspond to code or data within the image itself – but if there is a hard-coded access to 0xC0000000, an address which the compiler has no understanding of, and which is not part of the image, it can’t possibly emit relocations for it – this is a meaningless data dereference. But what if it could?

In such an implementation, all accesses to a particular magic hardcoded address could be described as such to the compiler, which could then work with the linker to generate a similar relocation table – but instead of describing addresses within the image, it would describe addresses outside of the image, which, if known and understood by the PE parser, could be adjusted to the new location of the hard-coded external data address. By doing so, compiled code would continue to access what appears to be a single literal value, and no global variable would ever be needed, cancelling out any disadvantages associated with the randomization of this address.

Indeed, the new build of the Microsoft C Compiler, which is expected to ship with Visual Studio 15 (now in preview), adds a special annotation that can be associated with constant values that correspond to external virtual addresses. Upon usage of such a constant, the compiler will ensure that accesses are done in a way that does not “break up” the address, but rather causes its absolute value to be expressed in code (i.e.: “mov rax, 0xC0000000”). Then, the linker collects the RVAs of such locations and builds structures of type IMAGE_DYNAMIC_RELOCATION, as shown below:

    typedef struct _IMAGE_DYNAMIC_RELOCATION {
        PVOID Symbol;
        DWORD BaseRelocSize;
        // IMAGE_BASE_RELOCATION BaseRelocations[0];
    } IMAGE_DYNAMIC_RELOCATION, *PIMAGE_DYNAMIC_RELOCATION;

When all entries have been written in the image, an IMAGE_DYNAMIC_RELOCATION_TABLE structure is written, with the type below:

    typedef struct _IMAGE_DYNAMIC_RELOCATION_TABLE {
        DWORD Version;
        DWORD Size;
        // IMAGE_DYNAMIC_RELOCATION DynamicRelocations[0];
    } IMAGE_DYNAMIC_RELOCATION_TABLE, *PIMAGE_DYNAMIC_RELOCATION_TABLE;

The RVA of this table is then written into the IMAGE_LOAD_CONFIG_DIRECTORY, which has been extended with the new field DynamicValueRelocTable and whose size has now been increased:

        ULONGLONG DynamicValueRelocTable;         // VA
    } IMAGE_LOAD_CONFIG_DIRECTORY64, *PIMAGE_LOAD_CONFIG_DIRECTORY64;

Now that we know how the compiler and linker work together to generate the data, the next question is: who processes it?

Runtime Dynamic Relocation Processing

In the Windows boot architecture, as the kernel is a standard PE file loaded by the boot loader, it is therefore the boot loader’s responsibility to process the import table of the kernel and load other required dependencies, to generate the security cookie, and to process the static (standard) relocation table. However, the boot loader does not have all the information required by the memory manager in order to randomize the address space as Windows 10 Redstone 1 now does – this remains the purview of the memory manager. Therefore, as far as the boot loader is concerned, the static PTE_BASE address is still the one to use, and indeed, early phases of boot still use this address (and the associated PDE/PPE/PXE base addresses and self-referencing entry).

This clearly implies that it is not considered part of a PE loader’s job to process the dynamic relocation table, but rather the job of the component that creates the dynamic address space map, which has now been enlightened with this knowledge. In the most recent builds, this is done by MiRebaseDynamicRelocationRegions, which eventually calls MiPerformDynamicFixups. This routine locates the PE file’s Load Configuration Directory, gets the RVA (now a VA, thanks to relocations done by the boot loader) of the Dynamic Relocation Table, and begins parsing it. At this moment, it only supports version 1 of the table. Then, it loops through each entry, adjusting the absolute address with the required delta to point to the new PTE_BASE address.
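The parsing loop described above might look roughly like the sketch below. This is not the actual MiPerformDynamicFixups code, which is not public. It assumes that Size counts the bytes following the table header, that each entry header is 12 bytes (an 8-byte Symbol followed by a 4-byte BaseRelocSize, with no padding), and that the nested blocks reuse the 4-bit type / 12-bit offset encoding of ordinary base relocations with type 10 for 64-bit values. The function name and the rd32/rd64 helpers are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

static uint32_t rd32(const uint8_t *p) { uint32_t v; memcpy(&v, p, sizeof v); return v; }
static uint64_t rd64(const uint8_t *p) { uint64_t v; memcpy(&v, p, sizeof v); return v; }

/* Hypothetical fixup pass: retargets every 64-bit literal recorded for
 * `old_base` so that it is expressed relative to `new_base` instead.
 * Returns the number of literals rewritten. */
static size_t perform_dynamic_fixups(uint8_t *image, size_t image_size,
                                     const uint8_t *table, size_t table_bytes,
                                     uint64_t old_base, uint64_t new_base)
{
    assert(image != NULL && table != NULL);
    if (table_bytes < 8 || rd32(table) != 1)     /* only version 1 is understood */
        return 0;
    size_t end = 8 + (size_t)rd32(table + 4);    /* assumed: Size excludes the header */
    if (end > table_bytes)
        return 0;

    size_t fixed = 0, pos = 8;
    while (end - pos >= 12) {                    /* one IMAGE_DYNAMIC_RELOCATION */
        uint64_t symbol = rd64(table + pos);
        size_t relocs_end = pos + 12 + rd32(table + pos + 8);
        pos += 12;
        if (relocs_end > end)
            break;
        while (relocs_end - pos >= 8) {          /* nested IMAGE_BASE_RELOCATION blocks */
            uint32_t page_rva = rd32(table + pos);
            uint32_t block_size = rd32(table + pos + 4);
            if (block_size < 8 || block_size > relocs_end - pos)
                return fixed;                    /* malformed block */
            for (size_t off = 8; off + 2 <= block_size; off += 2) {
                uint16_t entry;
                memcpy(&entry, table + pos + off, sizeof entry);
                size_t rva = (size_t)page_rva + (entry & 0x0FFF);
                if ((entry >> 12) != 10 || symbol != old_base)
                    continue;                    /* padding, or another symbol */
                if (rva > image_size || image_size - rva < 8)
                    continue;                    /* outside the image */
                uint64_t literal = rd64(image + rva) - old_base + new_base;
                memcpy(image + rva, &literal, sizeof literal);
                fixed++;
            }
            pos += block_size;
        }
        pos = relocs_end;
    }
    return fixed;
}
```

Note that the fixup touches the immediate operand embedded in the instruction stream, not a variable. After the pass, code such as a mov with a 64-bit immediate still loads a plain literal, which is the whole point of the design.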

It is important to note that the memory manager only calls MiPerformDynamicFixups on the binaries that it knows require such fixups due to the use of PTE_BASE: the kernel (ntoskrnl.exe) and the HAL (hal.dll). As such, this is not (yet) intended as a generic mechanism for all PE files to allow dynamic relocations of hard-coded addresses toward ASLRed regions of memory – but rather a highly vertically integrated feature specifically designed for dealing with the randomization of the PTE array, and the components that have hardcoded dependencies on it.

As such, even if one were to discover the undocumented annotation which allows the new version of the compiler to generate such tables, no component would currently parse such a table.

Sneaky Side Effects

A few interesting details are of note in the implementation. The first is that the initial version of the implementation, which shipped in build 14316, contained a static address in the loader block, which corresponded to the PTE base address that the loader had selected, and was then overwritten by a new fixed PTE base address (0xFFFFFA00`00000000 on x64).

The WDK, which contains the PTE_BASE address for developers to see (but apparently not use!) also contained this new address, and the debugger was updated to support it. This was presumably done to gauge the impact of changing the address in any way – and indeed we can see release notes referring to certain AV products breaking around the time this build was released. I personally noticed this change by disassembling MmGetPhysicalAddress to see if the PTE base had been changed (a normal part of my build analysis).

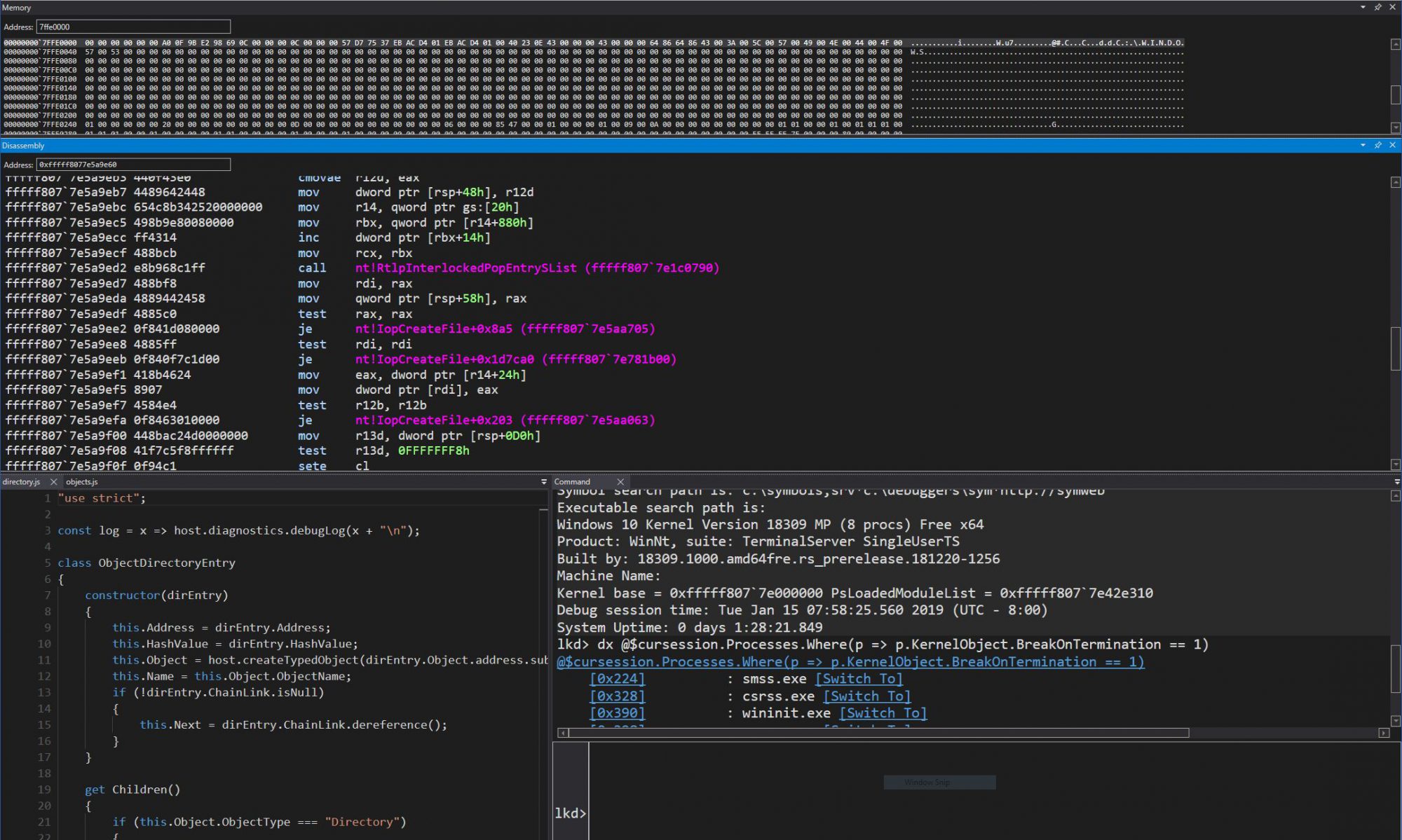

The next build, 14332, seemingly contained no changes: reverse engineering of the function showed usage of the same address once again. However, as I was playing around with the !pte extension in the debugger, I noticed that a new address was now being used – and that on a separate machine, this address was different again. Staring at IDA/Hex-Rays, I could not understand how this was possible, as MmGetPhysicalAddress was clearly using the same new base as 14316!

It is only once I unassembled the function in WinDBG that I noticed something strange – the base address had been modified to a different value. This led me to the hunt for the dynamic relocation table mechanism. But this is an important point about this implementation – it offers a small amount of “security through obscurity” as a side-effect: attackers or developers attempting to ‘dynamically discover’ the value of the PTE base by analyzing the kernel file on disk will hit a roadblock – they must look at the kernel file in memory, once relocations have been made. Spooky!

Conclusion

It is often said that all software engineering decisions and features lie somewhere between the four quadrants of security, performance, compatibility and functionality. As such, as an example, the only way to increase security without affecting functionality is to impact compatibility and performance. Although randomizing the PTE_BASE does indeed cause potential compatibility issues, we’ve seen here how control of the compiler (and the underlying linked object file) can allow implementers to “cheat” and violate the security quadrant, in a similar way that silicon vendors can often work with operating system vendors in order to create overhead-free security solutions (one major advantage that Apple has, for example).